Using AWS EC2 is almost always cheaper than managing on-premises hardware.

But those EC2 costs can still stack up, and every dollar counts.

Here are 11 surefire ways to slash your AWS EC2 bill.

1. Rightsize your EC2s

The cost of running an EC2 instance for an hour can range from nothing to more than US$25, depending on which type (e.g. t2.small, t3.large) you choose and how you configure it.

During quiet times, you usually want to deploy the least expensive EC2 instances that still meet your needs. During busier times, you usually want to increase the number of instances you have deployed, rather than the specs of your instances, as starting and stopping instances on demand is a far simpler process than relaunching them with different specs. This is captured by the popular notion in the devops community of treating instances like “cattle not pets”.

| Scenario | Cattle | Pets |
|---|---|---|
| Server status | One of many e.g. “server-0XX2B” | Unique e.g. “The Beast: Do Not Touch” |
| When sick | Kill and replace | Nurse back to health |
| When demand grows | Scale out a.k.a. “horizontal scaling” i.e. launch more | Scale up a.k.a. “vertical scaling” i.e. resize and relaunch |

There are two ways to check whether an EC2 instance is rightsized:

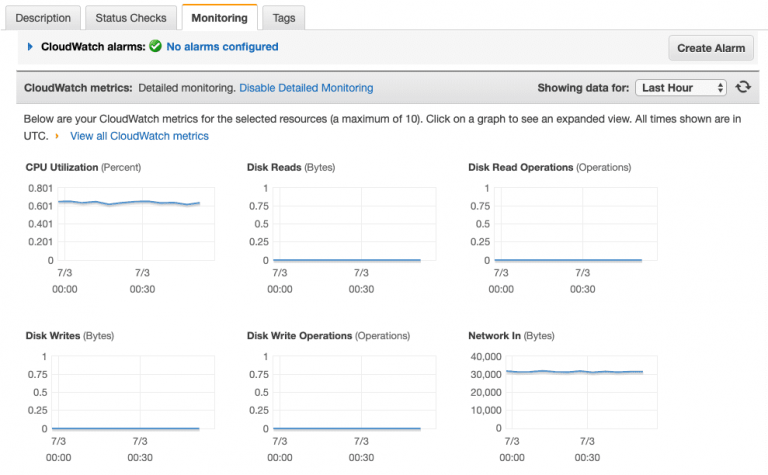

a. The quickest way to check if the EC2 instance is correctly specified is to use the AWS console. You can click the Monitoring tab while viewing the instance.

Underutilized instance

b. For a more scientific view, Amazon provides a free tool that analyzes two weeks of utilization data to make right-sizing recommendations for EC2 instances.

The potential savings are enormous, but right-sizing is not always simple, for many reasons. It is very hard to source quality recommendations — for example, memory metrics are usually not considered. Two weeks of utilization data might not always be enough to make an optimal recommendation.
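To make the idea of an underutilized instance concrete, here is a minimal sketch of a CPU-only check. The function name, the thresholds and the sample readings are all assumptions made for illustration, not values recommended by AWS. Like many recommendation tools, it ignores memory, which is exactly the limitation described above.

```python
from statistics import mean


def is_underutilized(cpu_percent_samples, avg_threshold=10.0, peak_threshold=40.0):
    """Return True when both average and peak CPU stay below the thresholds.

    The thresholds are illustrative assumptions, not AWS guidance.
    """
    if not cpu_percent_samples:
        raise ValueError("need at least one utilization sample")
    average = mean(cpu_percent_samples)
    peak = max(cpu_percent_samples)
    return average < avg_threshold and peak < peak_threshold


# Hypothetical hourly CPU readings (percent) for two instances.
quiet_instance = [2.0, 3.5, 1.0, 4.0, 2.5]
busy_instance = [35.0, 60.0, 82.0, 47.0, 55.0]

print(is_underutilized(quiet_instance))
print(is_underutilized(busy_instance))
```

Checking the peak as well as the average is a deliberate choice: an instance that idles most of the day but spikes during a nightly job is not a safe candidate for downsizing.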

AMIs (Amazon Machine Images) can be different in ways that might not be obvious, including their virtualization model, their disk types and their preinstalled software. These variables can affect not just performance, but even the regional availability of the AMI and software licensing. Different AMIs are often tuned for specific scenarios, such as for database-heavy applications.

2. Use Tags To Target Your Cost-Optimization

Many of the most powerful strategies you can use to reduce EC2 costs involve targeting some EC2 instances and not others. For example, only targeting:

- Production instances

- Non-production instances

- Batch-processing instances

- Rogue instances

- Instances used by analysts with security clearance in countries that have a Saturday–Wednesday work week and 8am–8pm office hours

Imagine a cost-optimization strategy that was designed for the instance that your software developers use for testing builds during office hours. Now consider what would happen if you applied that strategy to the instance that hosted your web server. At best you would leave money on the table. At worst, you would cause an outage. So, it is critically important to find the right instances to target with each strategy. Use tags to find and optimize EC2 instances in the AWS Console and the AWS API as well as in your GorillaStack rules. This allows you to reduce EC2 costs by controlling your resources using tags.
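As a rough sketch of what targeting by tag looks like in code, the snippet below filters a list of instances by required tags. The instance dictionaries follow the `Tags` list-of-`Key`/`Value` shape that the EC2 API returns, but the instance IDs, tag values and function names are hypothetical.

```python
def tags_as_dict(instance):
    """Flatten the EC2-style [{'Key': ..., 'Value': ...}] list into a dict."""
    return {tag["Key"]: tag["Value"] for tag in instance.get("Tags", [])}


def select_by_tags(instances, required):
    """Return only the instances that carry every required tag value."""
    selected = []
    for instance in instances:
        tags = tags_as_dict(instance)
        if all(tags.get(key) == value for key, value in required.items()):
            selected.append(instance)
    return selected


# Hypothetical inventory; the IDs and tags are made up for illustration.
inventory = [
    {"InstanceId": "i-web", "Tags": [{"Key": "environment", "Value": "production"}]},
    {"InstanceId": "i-test", "Tags": [{"Key": "environment", "Value": "staging"},
                                      {"Key": "team", "Value": "finance"}]},
    {"InstanceId": "i-unknown"},
]

targets = select_by_tags(inventory, {"environment": "staging"})
print([instance["InstanceId"] for instance in targets])
```

Because the web server is tagged `environment: production`, a strategy aimed at `environment: staging` never touches it.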

Tagging is so important — for cost optimization, but also for security, compliance and devops productivity — that GorillaStack has released an open source tool that anyone can use to tag their AWS resources.

Use it to automatically apply consistent tags to your AWS resources, completely free of charge.

3. Stop Non-Production Instances When They’re Not In Use

Suggested tags:

- environment: develop

- environment: staging

- production: false

- team: finance

- team: hr

If an EC2 instance is only used during office hours, then it does not need to run on weekends, when it would cost you money for no reason.
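The arithmetic behind that claim is simple enough to sketch. The snippet below is an illustration only: the function names and the default 8am to 6pm, Monday to Friday schedule are assumptions, and actually starting or stopping instances is left out.

```python
from datetime import datetime


def should_be_running(now, start_hour=8, stop_hour=18, workdays=(0, 1, 2, 3, 4)):
    """True if 'now' falls inside office hours (Monday is weekday 0)."""
    return now.weekday() in workdays and start_hour <= now.hour < stop_hour


def weekly_running_fraction(start_hour=8, stop_hour=18, workdays=(0, 1, 2, 3, 4)):
    """Share of the 168 hours in a week that the schedule keeps the instance up."""
    return (stop_hour - start_hour) * len(workdays) / (24 * 7)


print(should_be_running(datetime(2021, 1, 14, 10, 0)))  # a Thursday morning
print(should_be_running(datetime(2021, 1, 16, 10, 0)))  # a Saturday morning
print(f"{weekly_running_fraction():.0%} of the week")
```

With these defaults the instance runs for 50 of the 168 hours in a week. A team with a Saturday to Wednesday work week and 8am to 8pm hours could pass `workdays=(5, 6, 0, 1, 2)` and `stop_hour=20` instead.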

It is possible to manually start and stop instances in the AWS Console or the AWS API every day. You can also create a pair of GorillaStack rules to start and stop EC2 instances automatically. If you do so, then you get a number of nice extras.

For example, you can give the CFO the ability to click the Run Now button on the Start Finance Team Resources rule when she pops into the office on a Saturday and needs to fire up her work environment. That’s much easier than trying to raise the IT manager on the phone.

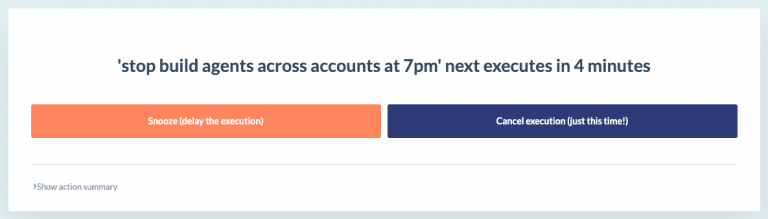

You can also alert your developers via Slack or email when their instances are soon to be stopped, so they can snooze the rule if they are working late. You no longer have to schedule shutdown at 8pm because staff sometimes work late, so you can save even more money by scheduling it for 6pm, safe in the knowledge that staff can snooze the scheduled shutdown with one click of a button.

And because it’s all codified in rules, there is no risk of anyone starting or stopping the wrong instances in the AWS Console. Plus, you reduce your EC2 costs with ease.

Snooze Execution in a GorillaStack Rule

4. Scale Production Instances In Response To Customer Demand

Suggested tags:

- environment: production

- production: true

- team: ecommerce

- application: website

According to AWS, Auto Scaling is a tool that monitors your applications and automatically adjusts capacity to maintain steady, predictable performance at the lowest possible cost.

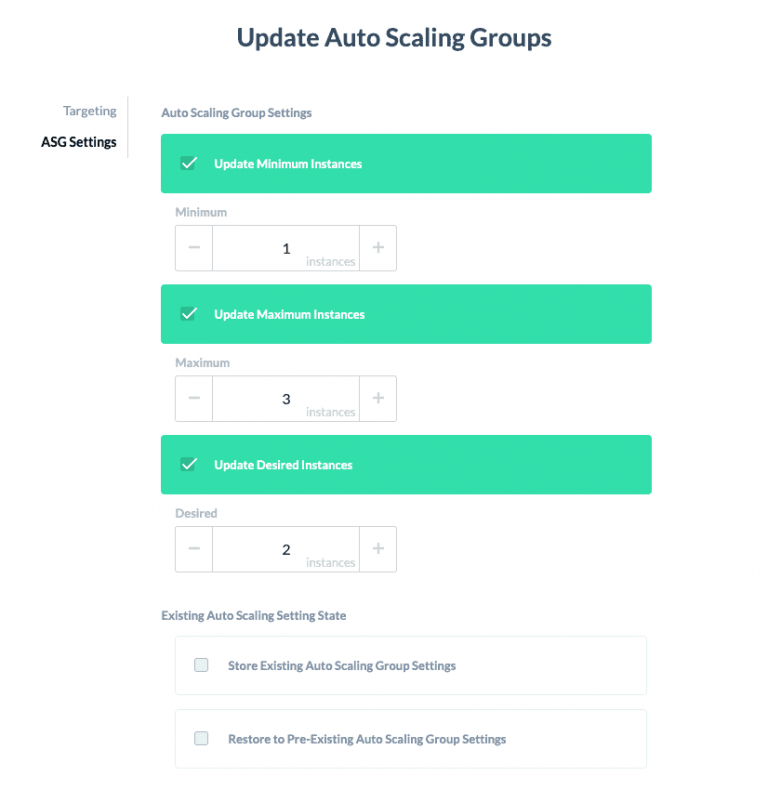

All your production EC2 instances (and many of your other instances) should be in AWS Auto Scaling groups. To maximize performance and minimize cost, adjust the minimum, maximum and desired number of instances that each Auto Scaling group deploys. You can do this manually, using the AWS Console or the AWS API. Or you can automate the process by putting it in a GorillaStack rule that runs on a schedule or that you can permit key staff to run with the click of a button:

Updating AWS Auto Scaling Groups in a GorillaStack rule
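To show how the minimum, maximum and desired instance counts interact, here is a deliberately simplified proportional scaling rule. It is not the algorithm AWS Auto Scaling uses; the function name, the metric and the numbers are assumptions chosen for illustration.

```python
import math


def desired_capacity(current_instances, observed_metric, target_metric, min_size, max_size):
    """Scale the fleet in proportion to load, then clamp to the group limits."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    proposed = math.ceil(current_instances * observed_metric / target_metric)
    return max(min_size, min(max_size, proposed))


# Hypothetical group: 4 instances, targeting 60% average CPU, limits of 2 and 10.
print(desired_capacity(4, 90, 60, min_size=2, max_size=10))   # load above target
print(desired_capacity(4, 15, 60, min_size=2, max_size=10))   # load well below target
print(desired_capacity(4, 300, 60, min_size=2, max_size=10))  # extreme spike
```

The clamp is where the cost control lives: the maximum caps your spend during a spike, and the minimum stops a quiet night from scaling the service away entirely.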

5. Automatically Terminate Rogue EC2 Instances Before They Cost You Serious Money

Suggested tags:

environment: <undefined>

AWS makes it very easy for employees to spin up EC2 instances without approval, and that can be very expensive.

Fortunately, having a solid tagging strategy makes it easy to find and stop these resources. For example, if your strategy involves tagging every resource with environment: production, environment: staging or environment: development, then it is reasonable to assume that any EC2 instance that does not have an environment tag at all was spun up without permission and should be shut down.
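A minimal sketch of that check, assuming instance records shaped like the EC2 API's `Tags` lists. The instance IDs and the function name are hypothetical, and the snippet only reports candidates rather than stopping anything.

```python
def instances_missing_tag(instances, key="environment"):
    """Return the IDs of instances that have no tag with the given key."""
    rogue_ids = []
    for instance in instances:
        tag_keys = {tag["Key"] for tag in instance.get("Tags", [])}
        if key not in tag_keys:
            rogue_ids.append(instance["InstanceId"])
    return rogue_ids


# Hypothetical inventory for illustration.
inventory = [
    {"InstanceId": "i-prod", "Tags": [{"Key": "environment", "Value": "production"}]},
    {"InstanceId": "i-mystery", "Tags": [{"Key": "owner", "Value": "unknown"}]},
    {"InstanceId": "i-untagged"},
]

print(instances_missing_tag(inventory))
```

Returning a list instead of acting on it leaves room for a human approval step before anything is shut down.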

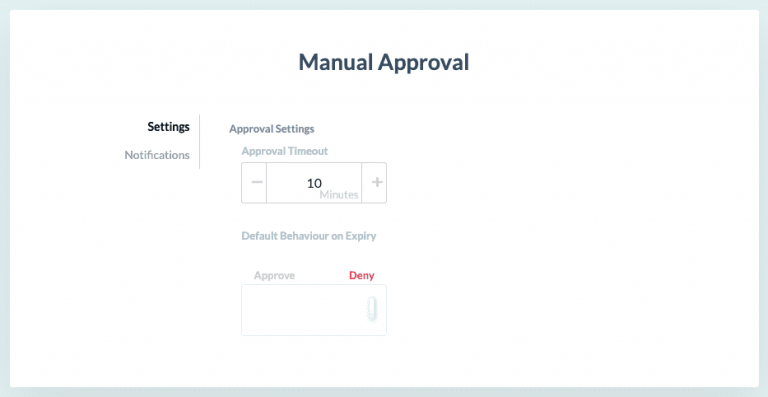

To automate the process of finding and terminating these instances, create a GorillaStack rule that terminates any instance that doesn’t have an environment tag. As a bonus, the rule can pause and request your approval before terminating any rogue instances that it has found.

Manual Approval in a GorillaStack rule

Alternatively, you could use a GorillaStack Real Time Events rule to alert you in real time when an instance of a certain size is deployed. The alert is triggered by a CloudTrail event and can be received via email, Slack, Teams or even through an API.

6. Let AWS Batch Run A Temporary Fleet Of Instances When A Job Is Ready

Suggested tags:

- team: data

- application: research

Some jobs require a team to spin up a large number of temporary EC2 instances, perform some processing, and then shut them down. For example, you might need to compress a day’s worth of raw images, reconcile a week’s worth of transactions or train an artificial intelligence system on a new set of data. AWS has a tool designed just for this scenario: AWS Batch.

AWS Batch has two main advantages from a cost-optimization point of view. First of all, starting and stopping a fleet of resources when required is obviously cheaper than running those EC2 instances continuously. But also, AWS Batch lets you bid for Spot Instances (see below) as part of the fleet that it starts and stops on your behalf.

7. Use Spot Pricing To Secure Temporary Instances For Less

Suggested tags:

pricing: spot

When you fire up an EC2 instance, by default it is ‘on demand’. This means you pay the ticket price and you can use it for as long as you require. However, you can also use spot pricing, which can be much cheaper, provided you can tolerate AWS taking the instance back with two minutes’ notice. Theoretical savings can be up to 90%, though you should check the Savings Summary in Spot Requests in AWS EC2 to confirm the actual savings you have secured.

If two minutes’ notice is too short, you can purchase one to six hours of guaranteed spot instance usage. With that, you still receive an estimated discount of 30–50%.

Using spot instances involves unique challenges. You have to identify workloads that can be interrupted without negative consequences. This might even involve rearchitecting part of your application so that more of its workflows can be processed as long lists of tiny idempotent tasks.
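Here is one way a list of tiny idempotent tasks might look in practice. This is a sketch under assumptions: the function and task names are hypothetical, the interruption signal is simulated, and in a real system the set of completed task IDs would live in durable storage rather than in memory.

```python
def process_tasks(task_ids, completed, work, interrupted=lambda: False):
    """Run work(task_id) for each task not yet done; stop early if interrupted.

    Re-running after an interruption is safe because finished tasks are skipped.
    """
    for task_id in task_ids:
        if interrupted():
            break
        if task_id in completed:
            continue
        work(task_id)
        completed.add(task_id)
    return completed


tasks = ["img-1", "img-2", "img-3", "img-4"]
completed = set()      # stands in for a durable record of finished work
processed_log = []     # records every task that was actually executed

# First run: simulate the instance being reclaimed after two tasks.
signals = iter([False, False, True])
process_tasks(tasks, completed, processed_log.append, interrupted=lambda: next(signals))

# Second run on a replacement instance: only the remaining tasks execute.
process_tasks(tasks, completed, processed_log.append)
print(processed_log)
```

Each task runs exactly once across both runs, so losing an instance costs you at most the task that was in flight.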

Since AWS introduced spot pricing, typical savings have fallen somewhat, but spot pricing has also become much easier to use. Consider EC2 Fleet (incorporating EC2 Spot Fleet) as a way of managing your use of spot pricing. You can also specify the use of spot pricing when you are setting up an AWS Auto Scaling group.

8. Use Reserved Instances To Cut The Cost Of Predictable Instance Use

Suggested tags:

pricing: reserved

At the opposite end of the spectrum, you can save considerable amounts of money by reserving an EC2 instance for a one-year or three-year term. Imagine you need an instance to manage your building’s CCTV cameras.

You know the instance has to run 24/7.

You know you will need it for at least three more years.

You know the instance will have a constant workload.

It’s the perfect candidate for a Reserved Instance.
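A quick break-even calculation shows why constant, 24/7 workloads suit reservations so well. The hourly rates below are hypothetical placeholders, not real AWS prices, and the function names are made up for this sketch.

```python
HOURS_PER_YEAR = 365 * 24


def break_even_utilization(on_demand_hourly, reserved_effective_hourly):
    """Fraction of the term an instance must run before reserving is cheaper."""
    return reserved_effective_hourly / on_demand_hourly


def yearly_saving(on_demand_hourly, reserved_effective_hourly, utilization=1.0):
    """On-demand cost at the given utilization minus the fixed reserved cost."""
    on_demand_cost = on_demand_hourly * HOURS_PER_YEAR * utilization
    reserved_cost = reserved_effective_hourly * HOURS_PER_YEAR
    return on_demand_cost - reserved_cost


# Hypothetical rates in US$ per hour, for illustration only.
on_demand, reserved = 0.10, 0.06

print(f"break-even utilization: {break_even_utilization(on_demand, reserved):.0%}")
print(f"saving at 24/7 use: ${yearly_saving(on_demand, reserved):.2f} per year")
print(f"saving at 40% use: ${yearly_saving(on_demand, reserved, 0.4):.2f} per year")
```

With these placeholder rates the reservation only pays off if the instance runs more than 60% of the time, which is why an office-hours instance is a poor candidate and the CCTV server is a good one.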

If you know you’ll need the instance for one to three years but you want the option of changing its specification in that period, you can purchase a Convertible Reserved Instance. These are not as cheap as Standard Reserved Instances, but still offer significant savings to reduce your EC2 costs.

There is also the Reserved Instance Marketplace where you can buy or sell Reserved Instance credits that have not been fully used. This is a good way of recouping some of your losses if you reserve an instance that you later find you do not need. It can also be a good way to buy short-term capacity cheaply if you have time to wade through RI listings. Note these can be fairly thin, especially in non-US regions.

9. Replace Instances With Containers, Functions And Managed Services

Suggested tags:

- application: database

- application: api

- application: build

After you’ve squeezed as much as possible out of your EC2 instances, then the next big round of savings comes from rearchitecting your application so you can replace instances with containers, functions and managed services. For example, here at GorillaStack we rearchitected our back-end to use AWS Lambda for our API endpoints. As a result, we were able to decommission many EC2 instances, reducing our AWS bill by more than 70%.

Likely targets for such a change include:

API endpoints

These are typically small pieces of logic that are invoked at random intervals and do not need to maintain internal state. Instead of serving your entire API from an EC2 instance, consider rewriting each endpoint as a function that can be served for less on AWS Lambda.

Databases

Though some database services (e.g. Amazon DocumentDB) are still quite pricey, using Amazon RDS is usually cheaper than running the equivalent database on an EC2 instance.

Build environments

A software build environment usually consists of a guaranteed environment plus a guaranteed set of tools like headless Chrome. Depending on your software release practices, it may not be needed day-in-day-out. If that sounds like your business, then you might save a considerable amount by turning your build environment into a container and then launching it when required using AWS Fargate.
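To give a feel for the API endpoint case, here is a minimal sketch of a single endpoint written as a Python Lambda function. It assumes the function sits behind an API Gateway proxy integration, which passes query parameters in `queryStringParameters` and expects a `statusCode` and `body` in return. The greeting logic is a made-up example, not GorillaStack's code.

```python
import json


def lambda_handler(event, context):
    """Handle one stateless request and return an HTTP-style response."""
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}"}),
    }


# Local smoke test with a hand-built event; no AWS account is needed.
response = lambda_handler({"queryStringParameters": {"name": "Ada"}}, None)
print(response["statusCode"], response["body"])
```

The function holds no state between calls, which is what makes it a good fit for pay-per-invocation pricing.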

10. Use The Above-Defined Tags To Take Your Cost Monitoring To The Next Level

Accountable, effective cost-optimization has four elements:

- Tag your resources effectively using the open source GorillaStack Auto Tag tool

- Apply a unique cost-optimization strategy for each group of instances, identified by their tags

- Use the AWS Console or the AWS API every time you want to implement each of those strategies — or, use GorillaStack to create rules that take care of all this forever

- Use the same tags in AWS’s Billing Management Console to take your cost monitoring and allocation to the next level

Without tags, the Billing Management Console does a great job of showing you how many instance-hours you used in the last month and what that cost you. But with a tagging strategy in place, you can use the console’s Cost Allocation Tags page to monitor the exact costs associated with each tag and track the effectiveness of all the targeted cost-optimization strategies you have put in place.
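The same roll-up can be sketched in a few lines if you export your billing data. The line-item shape, the costs and the function name below are hypothetical; a real export has many more columns.

```python
from collections import defaultdict


def cost_by_tag(line_items, tag_key):
    """Sum cost per value of one tag, grouping untagged items together."""
    totals = defaultdict(float)
    for item in line_items:
        label = item.get("tags", {}).get(tag_key, "(untagged)")
        totals[label] += item["cost"]
    return dict(totals)


# Hypothetical monthly line items in US$.
line_items = [
    {"cost": 120.0, "tags": {"environment": "production", "team": "ecommerce"}},
    {"cost": 30.0, "tags": {"environment": "staging", "team": "finance"}},
    {"cost": 45.5, "tags": {"environment": "staging", "team": "hr"}},
    {"cost": 12.0},
]

print(cost_by_tag(line_items, "environment"))
print(cost_by_tag(line_items, "team"))
```

The `(untagged)` bucket is worth watching: if it grows, either your tagging has gaps or someone is launching resources outside your process.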

11. 2021 Bonus Update – Savings Plans To Reduce EC2 Costs

Since this article was written, AWS has launched Savings Plans as a way to prepay for compute without committing to an Instance Type.

There are two types of Savings Plans:

- Compute Savings Plans – no commitment to instance type, family or region is required. They also apply to Fargate and Lambda usage.

- EC2 Instance Savings Plans – you’re required to commit to a specific instance family in a specific region.

Similar to Reserved Instances, the plans can save you up to 72% – you should read more about AWS Savings Plans vs Reserved Instances to drill into the detail.
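The trade-off in a Savings Plan is that you pay your hourly commitment whether you use it or not, and anything above it is billed on demand. The sketch below models that with a single blended discount, which is a simplifying assumption (real plans apply a different rate per instance type). All figures and names are hypothetical.

```python
def hourly_bill(on_demand_equivalent_usage, commitment, discount):
    """Cost for one hour under a simplified Savings Plan model.

    on_demand_equivalent_usage: what the hour's usage would cost on demand.
    commitment: the committed spend per hour, paid regardless of usage.
    discount: assumed blended discount as a fraction, e.g. 0.5 for 50%.
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must be in the range [0, 1)")
    # On-demand value of usage that the commitment can absorb at plan rates.
    covered_value = commitment / (1 - discount)
    overflow = max(0.0, on_demand_equivalent_usage - covered_value)
    return commitment + overflow


# Hypothetical figures in US$ per hour.
print(hourly_bill(10.0, commitment=4.0, discount=0.5))  # busy hour
print(hourly_bill(6.0, commitment=4.0, discount=0.5))   # quiet hour
```

In the quiet hour you still pay the full commitment, so sizing it to your steady baseline rather than your peak is usually the safer choice.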

First published on 16 July 2019 by Steven Noble.

Updated on 14 January 2021 by Oliver Berger.